Graph generation is a critical task in diverse fields like molecular design and social network analysis, owing to its capacity to model intricate relationships and structured data. Despite recent advances, many graph generative models heavily rely on adjacency matrix representations. While effective, these methods can be computationally demanding and often lack flexibility, making it challenging to efficiently capture the complex dependencies between nodes and edges, especially for large and sparse graphs. Current approaches, including diffusion-based and auto-regressive models, encounter difficulties in terms of scalability and accuracy, highlighting the need for more refined solutions.

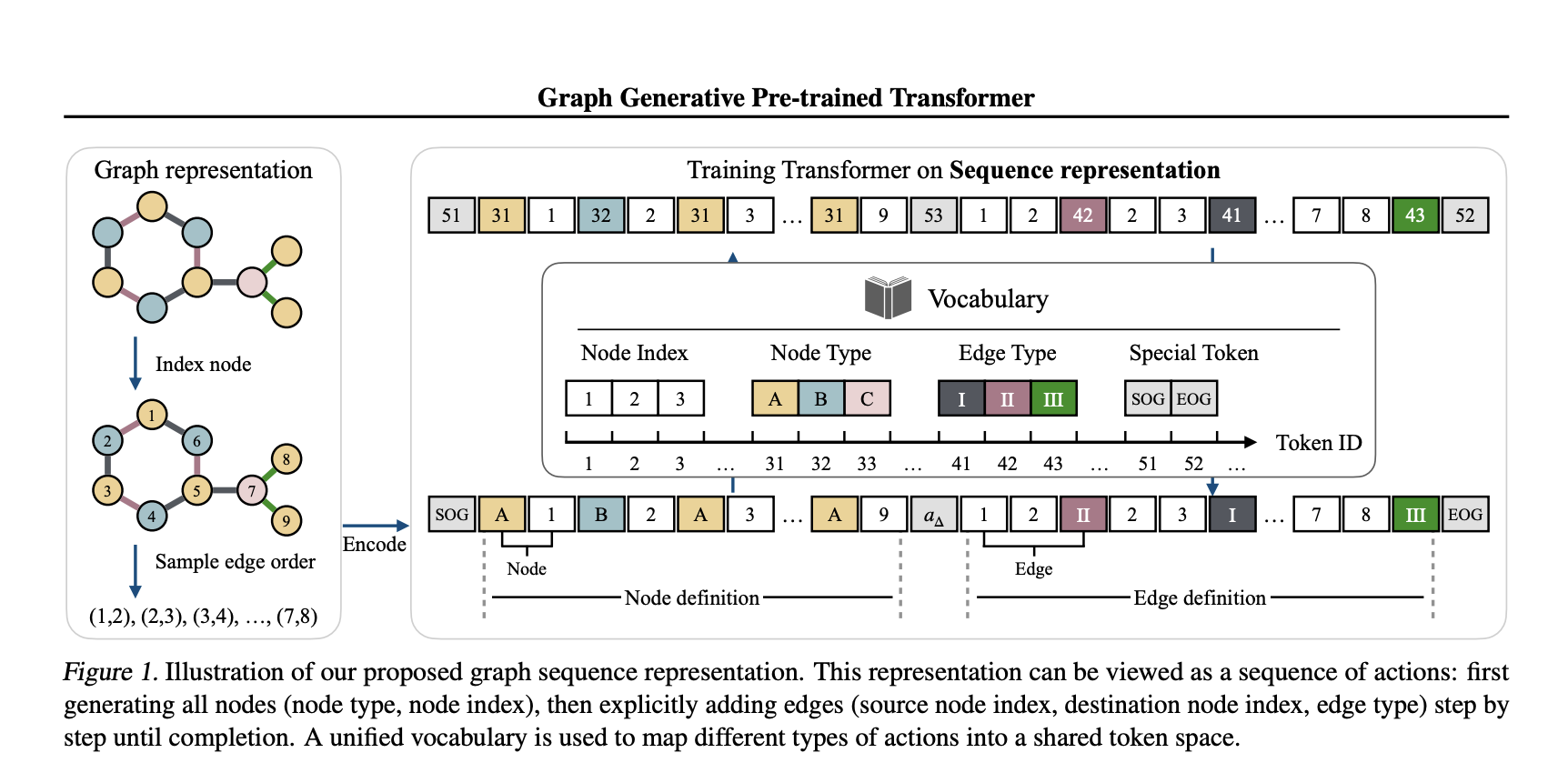

In a recent study, a team of researchers from Tufts University, Northeastern University, and Cornell University introduces the Graph Generative Pre-trained Transformer (G2PT), an auto-regressive model designed to learn graph structures through next-token prediction. Unlike traditional methods, G2PT employs a sequence-based representation of graphs, encoding nodes and edges as sequences of tokens. This approach streamlines the modeling process, making it more efficient and scalable. By leveraging a transformer decoder for token prediction, G2PT generates graphs that maintain structural integrity and flexibility. Moreover, G2PT can be readily adapted to downstream tasks, such as goal-oriented graph generation and graph property prediction, serving as a versatile tool for various applications.
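Here is a minimal sketch of what "next-token prediction" means for a serialized graph. It is not G2PT's implementation. It assumes a hypothetical token vocabulary (`<bos>`, `node`, `edge`, `<eos>`). It also replaces the transformer decoder with a function `next_token_dist` that returns a probability for each candidate next token. `next_token_pairs` shows the training signal, where each prefix predicts the token that follows it. `sample_sequence` shows auto-regressive generation, one token at a time.

```python
import random
from typing import Callable, Dict, List, Optional, Sequence, Tuple

# Hypothetical token sequence for a two-node graph (illustrative only;
# G2PT's real vocabulary is not specified in this article).
EXAMPLE_SEQUENCE = ["<bos>", "node", "0", "C", "node", "1", "O",
                    "edge", "0", "1", "single", "<eos>"]


def next_token_pairs(tokens: Sequence[str]) -> List[Tuple[List[str], str]]:
    """Training targets for next-token prediction: each prefix predicts the token after it."""
    return [(list(tokens[:i]), tokens[i]) for i in range(1, len(tokens))]


def sample_sequence(
    next_token_dist: Callable[[List[str]], Dict[str, float]],
    max_len: int = 64,
    rng: Optional[random.Random] = None,
) -> List[str]:
    """Generate auto-regressively: repeatedly sample the next token given the prefix."""
    rng = rng or random.Random(0)
    tokens = ["<bos>"]
    while len(tokens) < max_len:
        dist = next_token_dist(tokens)  # stands in for the decoder's softmax output
        choices, weights = zip(*dist.items())
        token = rng.choices(choices, weights=weights, k=1)[0]
        tokens.append(token)
        if token == "<eos>":
            break
    return tokens


def replay_model(prefix: List[str]) -> Dict[str, float]:
    """Toy 'model' that always predicts the next token of EXAMPLE_SEQUENCE."""
    return {EXAMPLE_SEQUENCE[len(prefix)]: 1.0}


if __name__ == "__main__":
    print(len(next_token_pairs(EXAMPLE_SEQUENCE)))  # 11 prefix/target pairs
    print(sample_sequence(replay_model) == EXAMPLE_SEQUENCE)  # True
```

In a real model, `next_token_dist` would be a trained transformer decoder conditioned on the whole prefix. The toy `replay_model` only shows that the generation loop reproduces a sequence when the model is certain of every next token.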

Technical Insights and Benefits

G2PT introduces a sequence-based representation that decomposes a graph into node definitions and edge definitions. Node definitions specify each node's index and type, while edge definitions specify which nodes are connected and the label of each connection. An adjacency matrix represents every possible pair of nodes. G2PT's sequence lists only the edges that actually exist, which avoids the sparsity of the matrix form and lowers computational cost. A transformer decoder models these sequences through next-token prediction, which brings advantages in efficiency, scalability, and adaptability.
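The node-then-edge representation can be sketched as a simple serializer and parser. This sketch rests on two assumptions. First, it uses a hypothetical vocabulary with `node`/`edge` keywords and `<bos>`/`<eos>` markers. Second, it takes edges in the order they are supplied. G2PT's actual tokenization and edge ordering may differ. The helper `sequence_length` shows why listing only existing edges helps on sparse graphs.

```python
from typing import Dict, List, Tuple

Edge = Tuple[int, int, str]  # (source index, target index, edge label)


def graph_to_tokens(node_types: Dict[int, str], edges: List[Edge]) -> List[str]:
    """Serialize a graph as node definitions followed by edge definitions.

    Only existing edges are emitted, so the length grows with the number of
    edges rather than with the number of possible node pairs.
    """
    tokens = ["<bos>"]
    for idx in sorted(node_types):
        tokens += ["node", str(idx), node_types[idx]]
    for src, dst, label in edges:  # edge order is taken as given
        tokens += ["edge", str(src), str(dst), label]
    tokens.append("<eos>")
    return tokens


def tokens_to_graph(tokens: List[str]) -> Tuple[Dict[int, str], List[Edge]]:
    """Invert graph_to_tokens (expects a sequence that ends with <eos>)."""
    node_types: Dict[int, str] = {}
    edges: List[Edge] = []
    i = 1  # skip <bos>
    while tokens[i] != "<eos>":
        if tokens[i] == "node":
            node_types[int(tokens[i + 1])] = tokens[i + 2]
            i += 3
        elif tokens[i] == "edge":
            edges.append((int(tokens[i + 1]), int(tokens[i + 2]), tokens[i + 3]))
            i += 4
        else:
            raise ValueError(f"unexpected token {tokens[i]!r} at position {i}")
    return node_types, edges


def sequence_length(num_nodes: int, num_edges: int) -> int:
    """Tokens used by this scheme: 3 per node, 4 per edge, plus <bos>/<eos>."""
    return 3 * num_nodes + 4 * num_edges + 2


if __name__ == "__main__":
    nodes = {0: "C", 1: "C", 2: "O"}
    edges = [(0, 1, "single"), (1, 2, "single")]
    print(graph_to_tokens(nodes, edges))
    # Sparse graph: 1,000 nodes and 2,000 edges vs. a 1,000 x 1,000 adjacency matrix.
    print(sequence_length(1000, 2000), 1000 * 1000)
```

Take a sparse graph with 1,000 nodes and 2,000 edges. This scheme needs 11,002 tokens, while a dense adjacency matrix has 1,000,000 entries. For tiny dense graphs the comparison can go the other way. Because edges are emitted in whatever order they are given, the same graph can map to different sequences. This is the ordering sensitivity the conclusion mentions.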

The researchers also explored fine-tuning methods for tasks like goal-oriented generation and graph property prediction, broadening the model’s applicability.

Experimental Results and Insights

G2PT has been evaluated on a range of datasets and tasks and performed strongly. In general graph generation, it matched or exceeded state-of-the-art performance across seven datasets. In molecular graph generation, G2PT achieved high validity and uniqueness scores, reflecting its ability to capture structural details accurately. For instance, on the MOSES dataset, G2PT_base attained a validity score of 96.4% and a uniqueness score of 100%.
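The two molecular metrics above can be illustrated with their common definitions. Validity is the fraction of generated graphs that form valid molecules. Uniqueness is the fraction of valid molecules that are distinct. The sketch assumes each sample has already been converted upstream (for example, with a cheminformatics toolkit) to a canonical string, or to `None` if it is invalid. Benchmarks such as MOSES may compute these over a fixed number of samples, so exact figures depend on the evaluation protocol.

```python
from typing import List, Optional, Tuple


def validity_and_uniqueness(samples: List[Optional[str]]) -> Tuple[float, float]:
    """Compute validity and uniqueness for generated molecules.

    Each sample is a canonical string (e.g., canonical SMILES) for a valid
    molecule, or None if the generated graph is chemically invalid.
    Validity   = valid samples / all samples.
    Uniqueness = distinct valid samples / valid samples.
    """
    if not samples:
        return 0.0, 0.0
    valid = [s for s in samples if s is not None]
    validity = len(valid) / len(samples)
    uniqueness = len(set(valid)) / len(valid) if valid else 0.0
    return validity, uniqueness


if __name__ == "__main__":
    generated = ["CCO", "CCO", "c1ccccc1", None]
    print(validity_and_uniqueness(generated))  # validity 0.75, uniqueness 2/3
```

Uniqueness is measured among valid samples only, so a model can score 100% uniqueness even when some of its outputs are invalid.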

In goal-oriented generation, G2PT was fine-tuned to align generated graphs with desired properties, using techniques such as rejection sampling and reinforcement learning, which let the model adapt its outputs effectively. In predictive tasks, G2PT's embeddings delivered competitive results across molecular property benchmarks, supporting its use for both generative and predictive tasks.
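Rejection sampling, as named above, can be read as a three-step loop. The model samples many graphs, keeps only those whose property score passes a threshold, and is then fine-tuned on the kept set. The sketch below covers only the selection step. `sample_fn`, `score_fn`, and `threshold` are hypothetical stand-ins for the pre-trained model's sampler and a property oracle. The paper's exact procedure (thresholds, number of rounds, the reinforcement-learning variant) is not described in this article.

```python
from typing import Callable, List, TypeVar

G = TypeVar("G")  # any graph representation


def rejection_sample(
    sample_fn: Callable[[], G],
    score_fn: Callable[[G], float],
    threshold: float,
    num_samples: int,
) -> List[G]:
    """Draw num_samples graphs and keep those whose property score meets the threshold.

    The kept graphs would then serve as fine-tuning data for the next round.
    """
    kept: List[G] = []
    for _ in range(num_samples):
        graph = sample_fn()
        if score_fn(graph) >= threshold:
            kept.append(graph)
    return kept


if __name__ == "__main__":
    scores = iter([0.2, 0.95, 0.5, 0.91])  # toy "graphs" identified by their score
    kept = rejection_sample(lambda: next(scores), lambda g: g, threshold=0.9, num_samples=4)
    print(kept)  # [0.95, 0.91]
```

Repeating sample, filter, and fine-tune shifts the model's output distribution toward the target property. The trade-off is that many samples may be discarded when the threshold is strict.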

Conclusion

The Graph Generative Pre-trained Transformer (G2PT) represents a thoughtful step forward in graph generation. By employing a sequence-based representation and transformer-based modeling, G2PT addresses many limitations of traditional approaches. Its combination of efficiency, scalability, and adaptability makes it a valuable resource for researchers and practitioners. While G2PT shows sensitivity to graph orderings, further exploration of universal and expressive edge-ordering mechanisms could enhance its robustness. G2PT exemplifies how innovative representations and modeling approaches can advance the field of graph generation.

Check out the Paper. All credit for this research goes to the researchers of this project.
