G2PT: Graph Generative Pre-trained Transformer

Jan 06, 2025 at 04:21 am

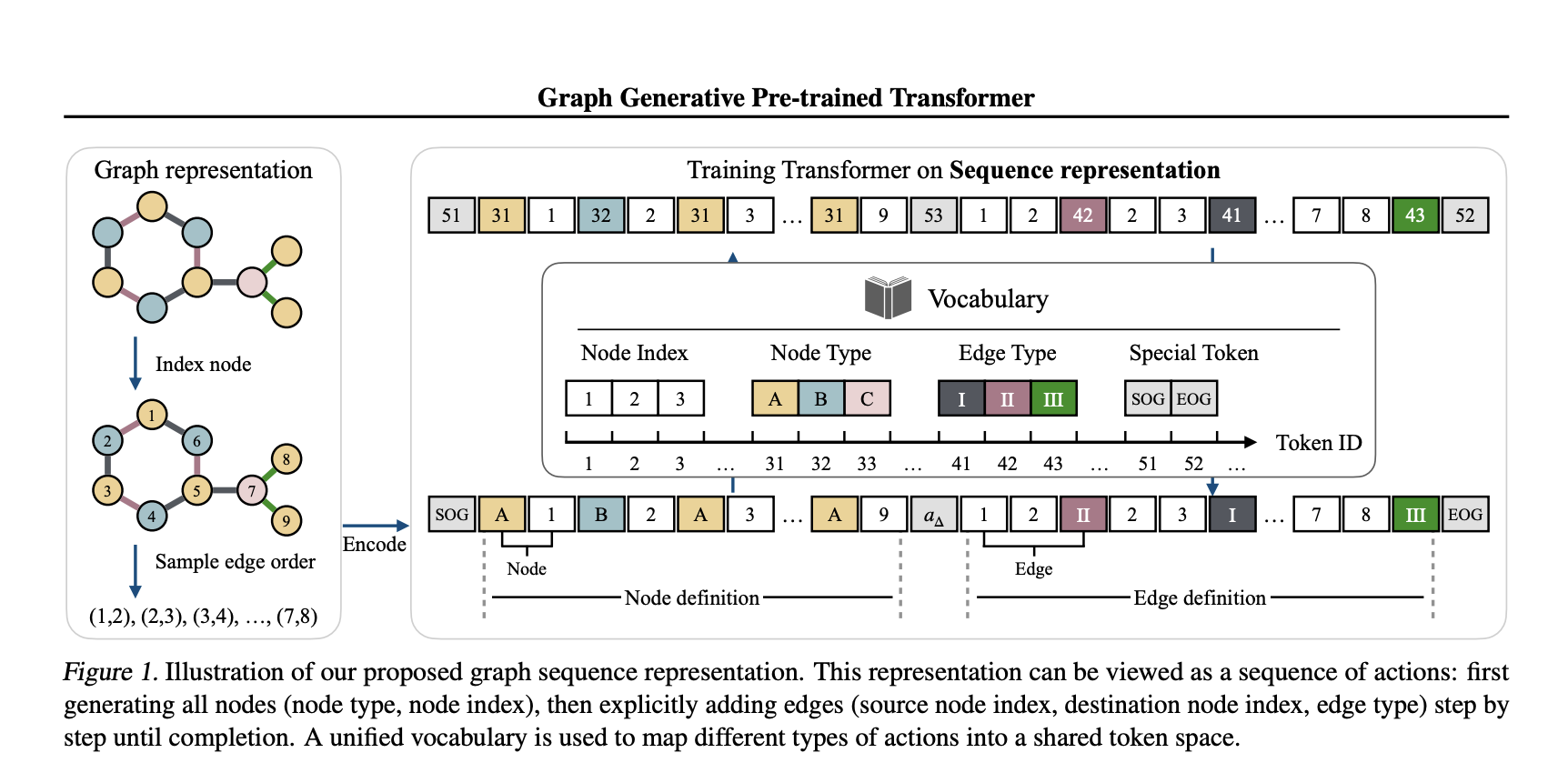

Graph generation is a critical task in diverse fields like molecular design and social network analysis, owing to its capacity to model intricate relationships and structured data. Despite recent advances, many graph generative models heavily rely on adjacency matrix representations. While effective, these methods can be computationally demanding and often lack flexibility, making it challenging to efficiently capture the complex dependencies between nodes and edges, especially for large and sparse graphs. Current approaches, including diffusion-based and auto-regressive models, encounter difficulties in terms of scalability and accuracy, highlighting the need for more refined solutions.

In a recent study, a team of researchers from Tufts University, Northeastern University, and Cornell University introduces the Graph Generative Pre-trained Transformer (G2PT), an auto-regressive model designed to learn graph structures through next-token prediction. Unlike traditional methods, G2PT employs a sequence-based representation of graphs, encoding nodes and edges as sequences of tokens. This approach streamlines the modeling process, making it more efficient and scalable. By leveraging a transformer decoder for token prediction, G2PT generates graphs that maintain structural integrity and flexibility. Moreover, G2PT can be readily adapted to downstream tasks, such as goal-oriented graph generation and graph property prediction, serving as a versatile tool for various applications.
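As a brief illustration of what "next-token prediction" usually means for a sequence model, the standard autoregressive factorization and its training loss can be written as follows. The notation ($s_t$ for the $t$-th token of a serialized graph, $T$ for the sequence length, $\theta$ for the model parameters) is introduced here for illustration and is not taken from the article.

$$
p_\theta(s) = \prod_{t=1}^{T} p_\theta(s_t \mid s_{<t}), \qquad \mathcal{L}(\theta) = -\sum_{t=1}^{T} \log p_\theta(s_t \mid s_{<t})
$$

Under this reading, generating a graph amounts to sampling tokens one at a time from $p_\theta(s_t \mid s_{<t})$ until an end-of-sequence token is produced.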

Technical Insights and Benefits

G2PT introduces a novel sequence-based representation that decomposes graphs into node and edge definitions. Node definitions specify indices and types, whereas edge definitions outline connections and labels. This approach differs fundamentally from adjacency matrix representations, which must account for every possible edge: by listing only the edges that actually exist, it avoids the overhead of representing sparse graphs densely and reduces computational complexity. The transformer decoder then models these sequences through next-token prediction.
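The node-then-edge decomposition described above can be sketched roughly as follows. This is a minimal illustration, not the authors' tokenizer: the token spellings (`<bos>`, `<sep>`, `<eos>`, `node_i`, `type_X`, `bond_Y`) and the function names `graph_to_tokens` and `sequence_length` are hypothetical choices made for this example.

```python
from typing import List, Tuple

Node = Tuple[int, str]        # (index, type)
Edge = Tuple[int, int, str]   # (source index, target index, label)


def graph_to_tokens(nodes: List[Node], edges: List[Edge]) -> List[str]:
    """Flatten a graph into node definitions followed by edge definitions.

    Token names are hypothetical; only edges that exist are emitted.
    """
    tokens = ["<bos>"]
    for index, node_type in nodes:
        tokens += [f"node_{index}", f"type_{node_type}"]
    tokens.append("<sep>")  # assumed separator between the two parts
    for src, dst, label in edges:
        tokens += [f"node_{src}", f"node_{dst}", f"bond_{label}"]
    tokens.append("<eos>")
    return tokens


def sequence_length(num_nodes: int, num_edges: int) -> int:
    """Token count under this toy scheme: 2 per node, 3 per edge, 3 specials."""
    return 2 * num_nodes + 3 * num_edges + 3


# Toy example: a three-atom chain with one single and one double bond.
nodes = [(0, "C"), (1, "C"), (2, "O")]
edges = [(0, 1, "single"), (1, 2, "double")]
tokens = graph_to_tokens(nodes, edges)
print(tokens)

# Compare against a dense adjacency matrix for a larger sparse graph.
print("sequence tokens:", sequence_length(1000, 1500))
print("adjacency entries:", 1000 * 1000)
```

In this toy scheme the sequence grows with the number of nodes and existing edges, whereas a dense adjacency matrix grows with the square of the node count, which is the intuition behind the efficiency claim for sparse graphs.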

The researchers also explored fine-tuning methods for tasks like goal-oriented generation and graph property prediction, broadening the model’s applicability.

Experimental Results and Insights

G2PT has been evaluated on various datasets and tasks, demonstrating strong performance. In general graph generation, it matched or exceeded state-of-the-art performance across seven datasets. In molecular graph generation, G2PT achieved high validity and uniqueness scores, reflecting its ability to accurately capture structural details. For instance, on the MOSES dataset, the G2PT base model attained a validity score of 96.4% and a uniqueness score of 100%.
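The article does not define how validity and uniqueness are computed. A minimal sketch under one common convention (validity as the fraction of generated samples that pass a validity check, uniqueness as the fraction of distinct samples among the valid ones) might look like this. The function `validity_and_uniqueness` and the toy validity check are hypothetical; a real evaluation would use a chemistry toolkit to validate and canonicalize molecules.

```python
from typing import Callable, Hashable, Iterable, Tuple


def validity_and_uniqueness(
    samples: Iterable[Hashable],
    is_valid: Callable[[Hashable], bool],
) -> Tuple[float, float]:
    """Return (validity, uniqueness) for a batch of generated samples.

    Assumed convention: uniqueness is measured among valid samples only.
    """
    samples = list(samples)
    valid = [s for s in samples if is_valid(s)]
    validity = len(valid) / len(samples) if samples else 0.0
    uniqueness = len(set(valid)) / len(valid) if valid else 0.0
    return validity, uniqueness


# Toy data: strings stand in for canonical molecule strings,
# and the empty string stands in for a failed (invalid) generation.
generated = ["CCO", "CCO", "CCN", "", "c1ccccc1"]
validity, uniqueness = validity_and_uniqueness(generated, lambda s: len(s) > 0)
print(f"validity={validity:.2f}, uniqueness={uniqueness:.2f}")
```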

In goal-oriented generation, G2PT aligned generated graphs with desired properties using fine-tuning techniques such as rejection sampling and reinforcement learning. These methods enabled the model to adapt its outputs effectively. Similarly, in predictive tasks, G2PT’s embeddings delivered competitive results across molecular property benchmarks, reinforcing its suitability for both generative and predictive tasks.
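The data-collection half of rejection-sampling fine-tuning can be sketched as below. This is an illustrative outline only: `rejection_sample`, `toy_generate`, and `has_two_oxygens` are hypothetical stand-ins for sampling from a pre-trained model and checking a target property, and the actual fine-tuning step on the accepted samples is omitted.

```python
import random
from typing import Callable, List


def rejection_sample(
    generate: Callable[[], List[str]],
    satisfies_goal: Callable[[List[str]], bool],
    num_accepted: int,
    max_tries: int = 10_000,
) -> List[List[str]]:
    """Draw samples and keep only those that meet the goal property."""
    accepted: List[List[str]] = []
    for _ in range(max_tries):
        if len(accepted) >= num_accepted:
            break
        candidate = generate()
        if satisfies_goal(candidate):
            accepted.append(candidate)
    return accepted


rng = random.Random(0)  # fixed seed so the sketch is reproducible


def toy_generate() -> List[str]:
    """Stand-in for sampling a token sequence from a pre-trained model."""
    length = rng.randint(1, 6)
    return [rng.choice(["C", "N", "O"]) for _ in range(length)]


def has_two_oxygens(sequence: List[str]) -> bool:
    """Stand-in for a property oracle (hypothetical goal)."""
    return sequence.count("O") >= 2


finetune_set = rejection_sample(toy_generate, has_two_oxygens, num_accepted=5)
print(finetune_set)
```

The accepted samples would then serve as training data for a further round of next-token training, nudging the model toward outputs that satisfy the goal.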

Conclusion

The Graph Generative Pre-trained Transformer (G2PT) represents a thoughtful step forward in graph generation. By employing a sequence-based representation and transformer-based modeling, G2PT addresses many limitations of traditional approaches. Its combination of efficiency, scalability, and adaptability makes it a valuable resource for researchers and practitioners. While G2PT shows sensitivity to graph orderings, further exploration of universal and expressive edge-ordering mechanisms could enhance its robustness. G2PT exemplifies how innovative representations and modeling approaches can advance the field of graph generation.
